The Evolution

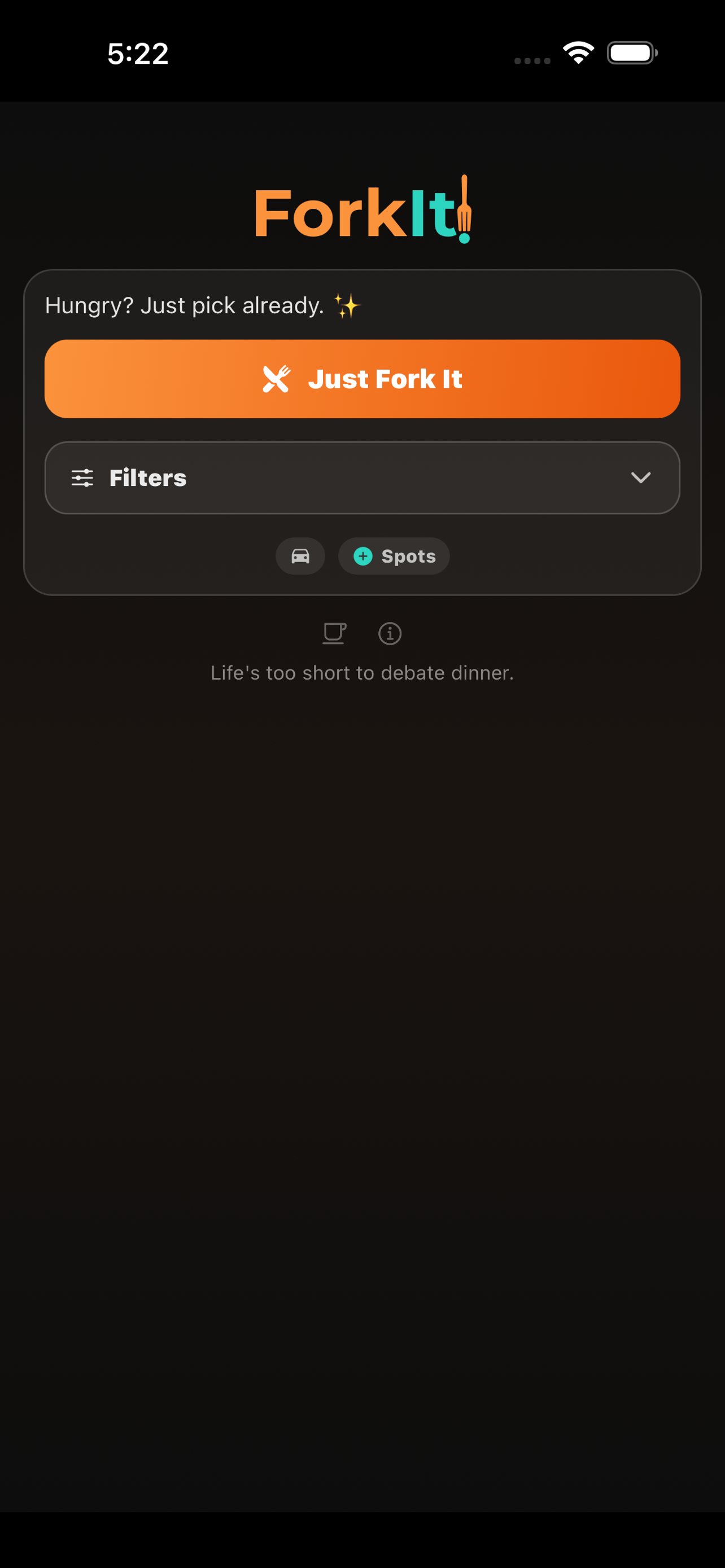

The Problem

"Where should we eat?"

Nobody could ever decide. Existing apps wanted me to scroll through ads and sponsored listings. I just wanted someone to pick for me.

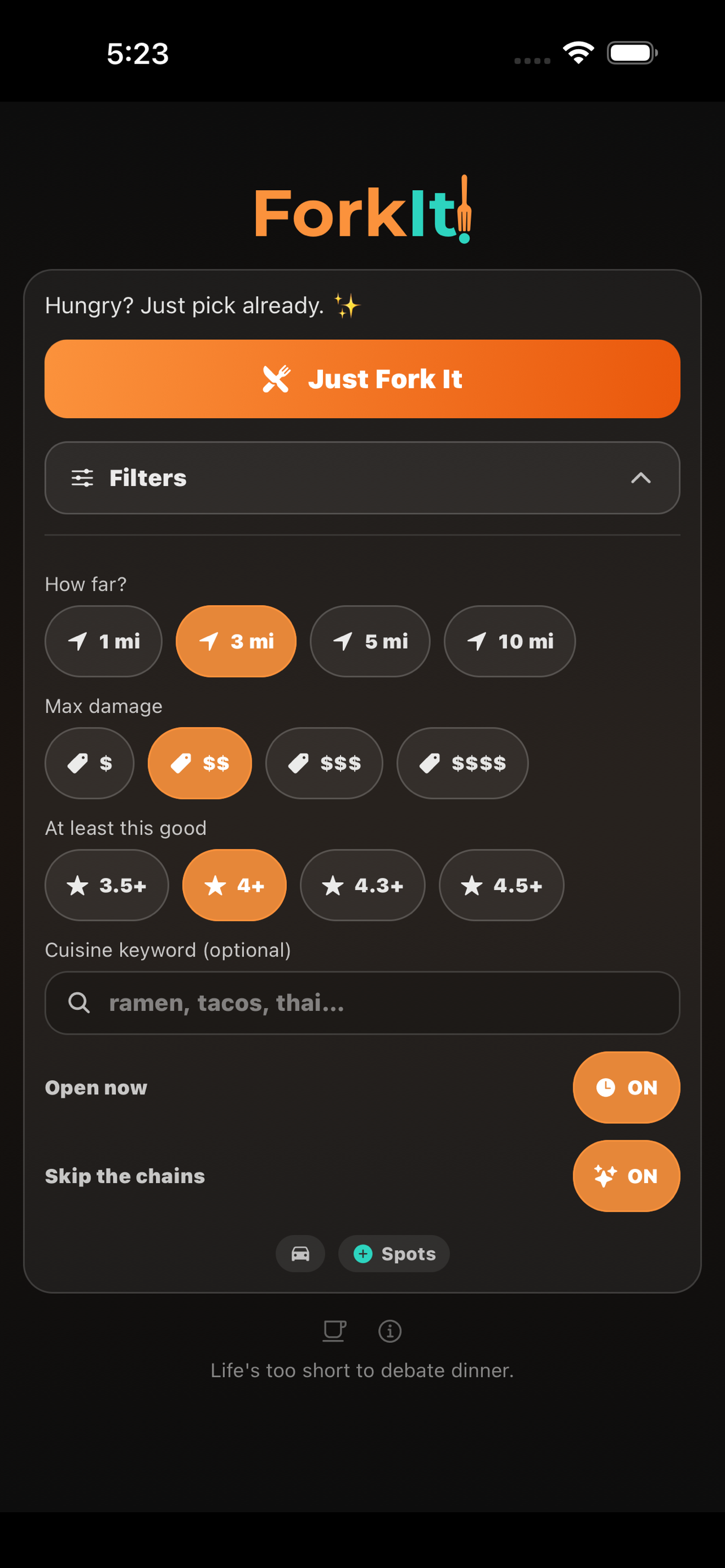

The Core Product

One button. Set your filters: how far, how expensive, what cuisine, what rating, open now, skip the chains. Tap the button. Get a restaurant.

The filters aren't a feature; they're the point.
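One way to picture "set your filters, tap, get a restaurant" is to filter the candidates first and then choose one of the survivors at random. The sketch below is minimal and makes several assumptions. The field names, the 1-4 price scale, the "None means any cuisine" convention, blocking by name, and the uniform random choice are all hypothetical and illustrative, not the app's actual code. It also assumes candidates are already loaded into memory.

```python
import random
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Restaurant:
    name: str
    distance_km: float
    price_level: int   # hypothetical scale: 1 ($) to 4 ($$$$)
    cuisine: str
    rating: float      # hypothetical scale: 0.0 to 5.0
    open_now: bool
    is_chain: bool

@dataclass(frozen=True)
class Filters:
    max_distance_km: float = 10.0
    max_price_level: int = 4
    cuisines: Optional[frozenset[str]] = None  # None means "any cuisine"
    min_rating: float = 0.0
    open_now: bool = False
    skip_chains: bool = False

def matches(r: Restaurant, f: Filters, blocked: frozenset[str]) -> bool:
    """True if the restaurant passes every filter and hasn't been blocked."""
    return (
        r.name not in blocked
        and r.distance_km <= f.max_distance_km
        and r.price_level <= f.max_price_level
        and (f.cuisines is None or r.cuisine in f.cuisines)
        and r.rating >= f.min_rating
        and (r.open_now or not f.open_now)
        and not (f.skip_chains and r.is_chain)
    )

def pick(candidates: list[Restaurant], f: Filters,
         blocked: frozenset[str] = frozenset()) -> Optional[Restaurant]:
    """Filter first, then choose uniformly at random; None if nothing matches."""
    pool = [r for r in candidates if matches(r, f, blocked)]
    return random.choice(pool) if pool else None

# Usage with made-up data: only the taco truck is close, open, and not a chain.
spots = [
    Restaurant("Taco Truck", 2.0, 1, "mexican", 4.6, True, False),
    Restaurant("Burger Chain", 1.0, 1, "american", 3.9, True, True),
    Restaurant("Bistro", 8.0, 3, "french", 4.4, False, False),
]
choice = pick(spots, Filters(max_distance_km=5, open_now=True, skip_chains=True))
print(choice.name if choice else "Nothing matches")  # prints "Taco Truck"
```

Filtering before the random choice means the filters define what counts as an acceptable answer, and chance only decides among acceptable answers.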

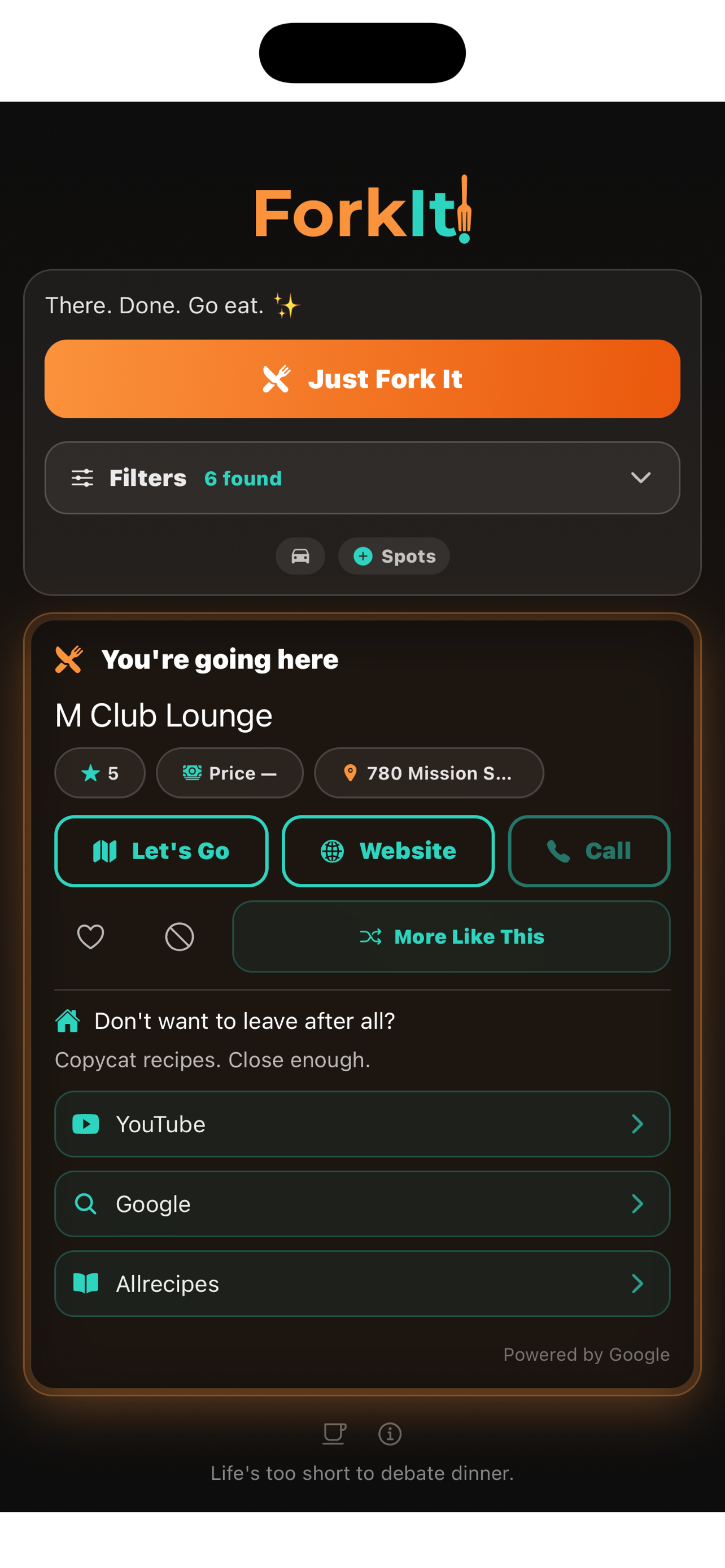

The Result

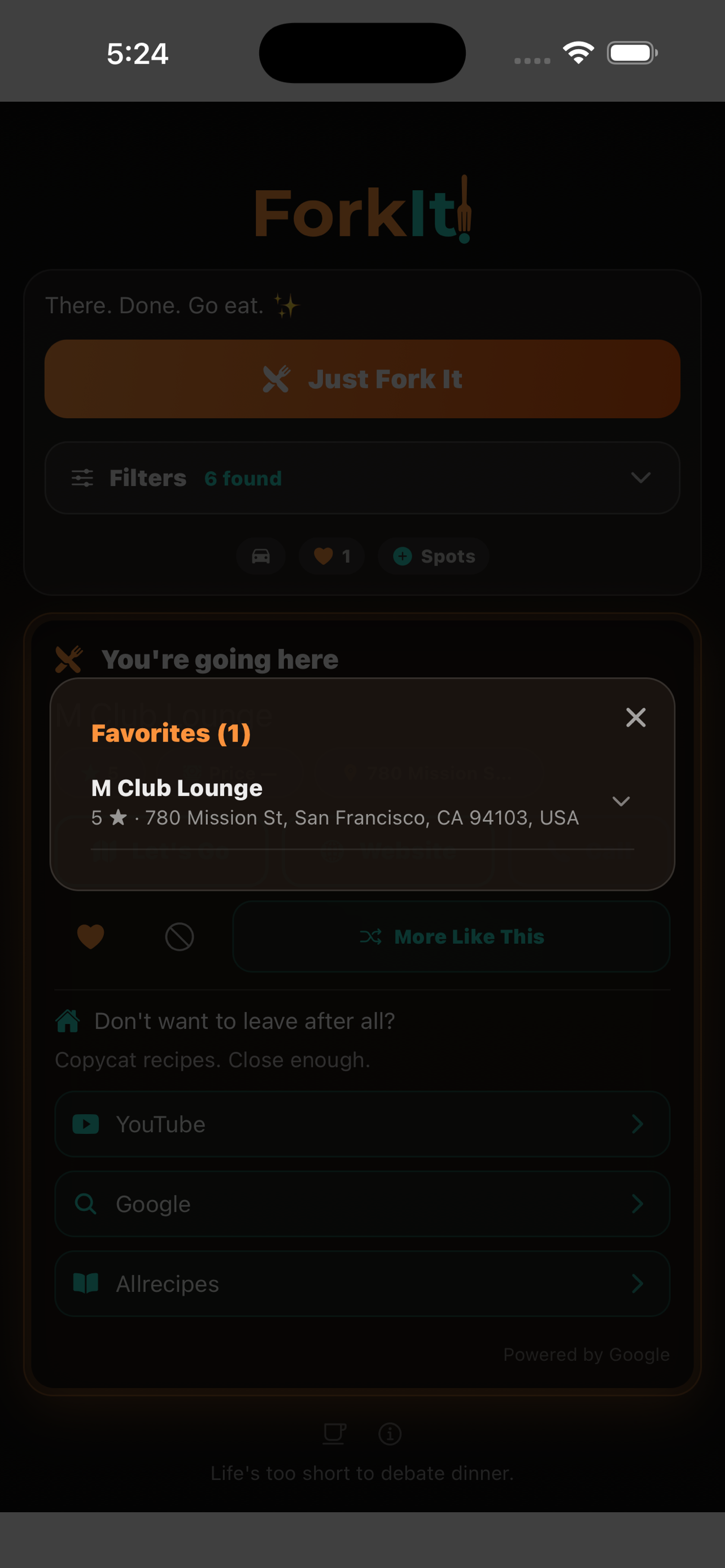

Name, address, rating, whether it's open. Directions, Website, Call. Save it or block it so it never comes up again.

Copycat recipes if you don't want to leave after all.

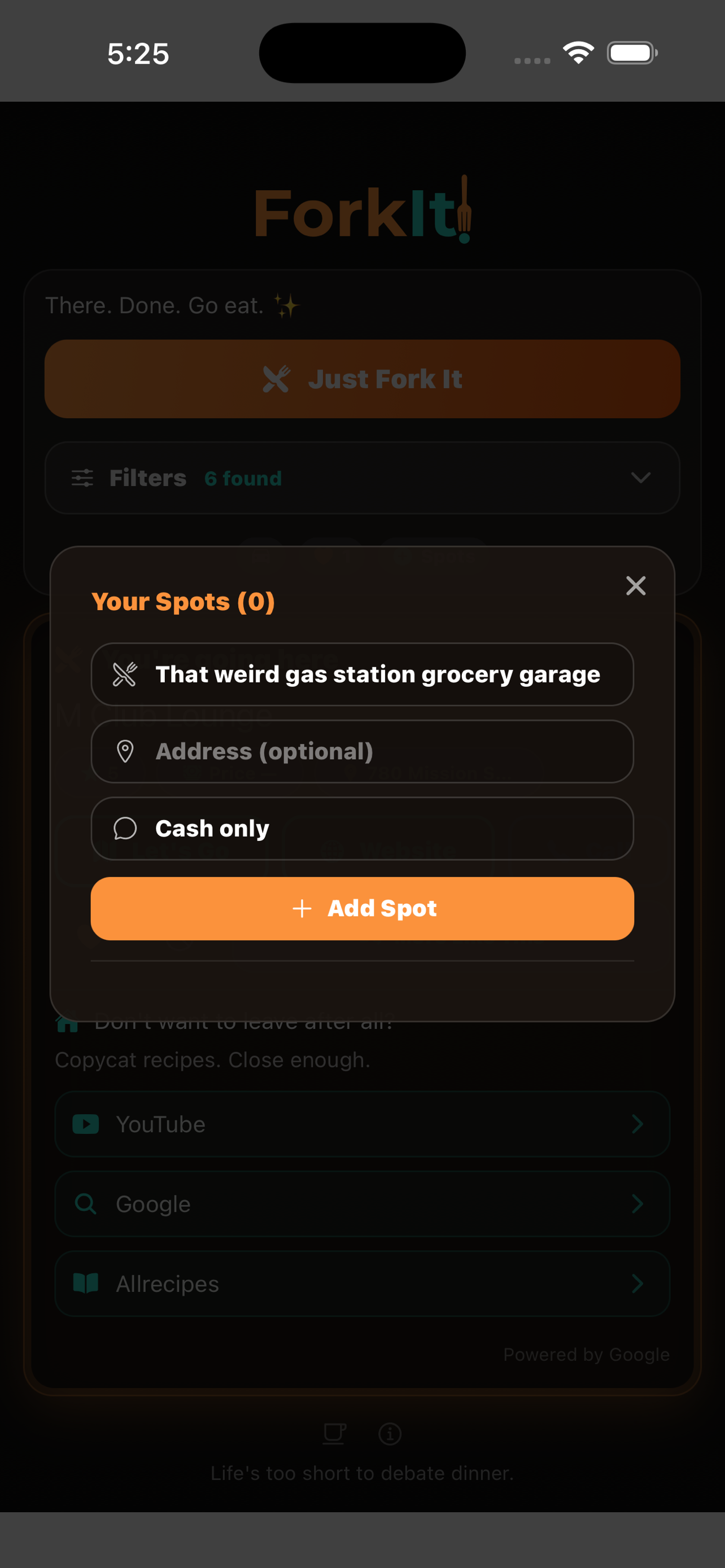

Your Spots

Google doesn't know about every good place. Custom spots let you add "that weird gas station grocery garage" or the taco truck that's only there on Thursdays.

"Can My Friends Use It Too?"

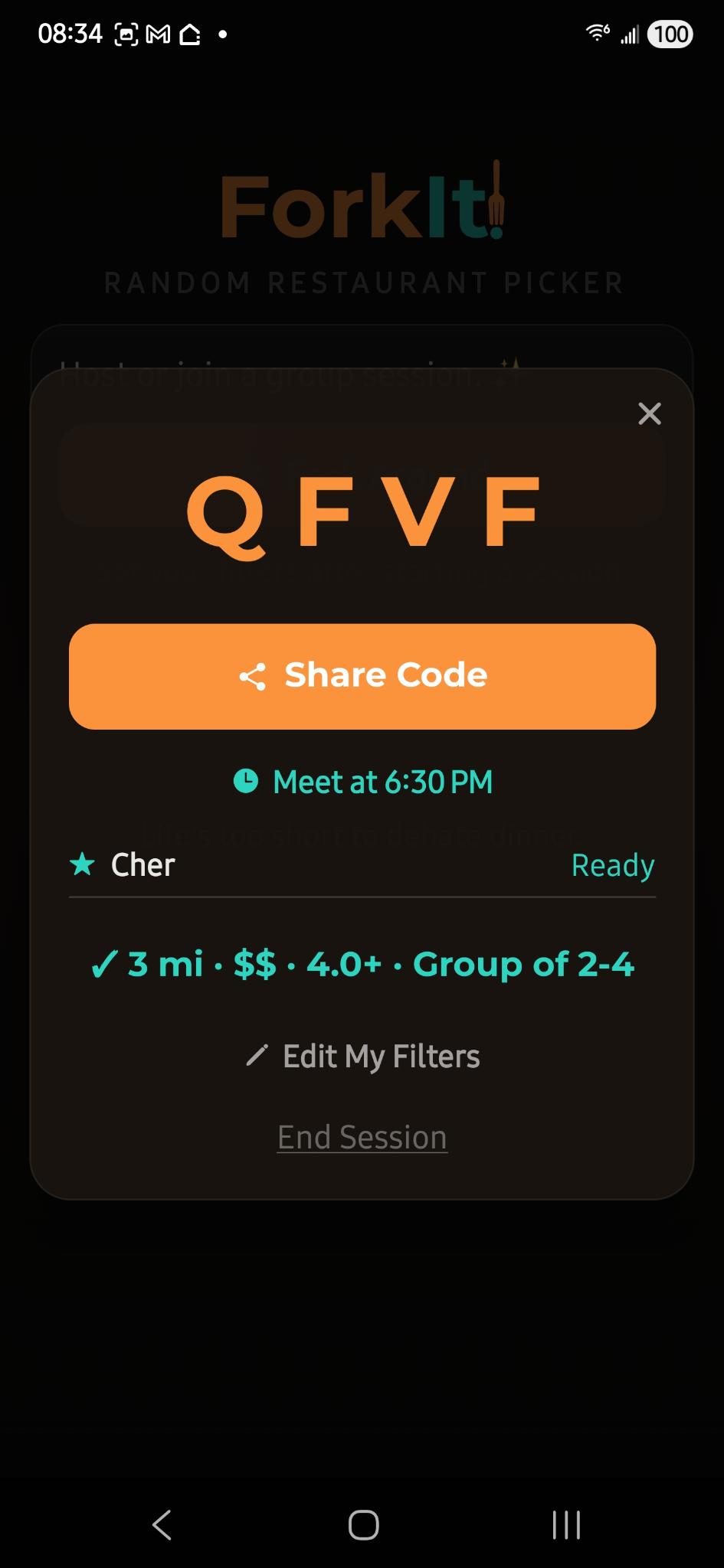

Fork Around: real-time group sessions. The host creates a session and gets a 4-letter code. Friends join from a browser, no app required. Everyone submits filters, the most restrictive filters win, and the app picks one restaurant at random.
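"The most restrictive filters win" can be read as folding everyone's filters into one combined set. That set keeps the smallest distance and price limits, the highest minimum rating, the intersection of cuisine choices, and any yes/no requirement that at least one person turned on. The sketch below also generates a 4-letter session code. It is a minimal illustration under stated assumptions and not the app's actual implementation. All names are hypothetical. The code alphabet is assumed to be uppercase A-Z only. "None means any cuisine" is also an assumed convention.

```python
import random
import string
from dataclasses import dataclass
from functools import reduce
from typing import Optional

@dataclass(frozen=True)
class Filters:  # hypothetical shape of one member's filters
    max_distance_km: float = 10.0
    max_price_level: int = 4
    cuisines: Optional[frozenset[str]] = None  # None means "any cuisine"
    min_rating: float = 0.0
    open_now: bool = False
    skip_chains: bool = False

def stricter(a: Filters, b: Filters) -> Filters:
    """Combine two members' filters, keeping the more restrictive value of each."""
    if a.cuisines is None:
        cuisines = b.cuisines
    elif b.cuisines is None:
        cuisines = a.cuisines
    else:
        cuisines = a.cuisines & b.cuisines  # may be empty: then nothing matches
    return Filters(
        max_distance_km=min(a.max_distance_km, b.max_distance_km),
        max_price_level=min(a.max_price_level, b.max_price_level),
        cuisines=cuisines,
        min_rating=max(a.min_rating, b.min_rating),
        open_now=a.open_now or b.open_now,
        skip_chains=a.skip_chains or b.skip_chains,
    )

def merge_group(filters: list[Filters]) -> Filters:
    """Fold every member's filters together; assumes at least one member (the host)."""
    return reduce(stricter, filters)

_rng = random.SystemRandom()

def new_session_code(active_codes: set[str]) -> str:
    """Draw random 4-letter codes until one isn't already in use (26**4 possibilities)."""
    while True:
        code = "".join(_rng.choice(string.ascii_uppercase) for _ in range(4))
        if code not in active_codes:
            return code
```

For example, if one friend allows Thai or Mexican within 5 km and another allows Mexican or Indian at price level 2 or below, the merged filters allow only Mexican, within 5 km, at price level 2 or below. The combined filters could then feed the same filter-then-pick-at-random step used for a single user.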

The decision is made. Nobody's fault. Move on with your life.

Then It Got Complicated

User accounts. Cloud sync. Subscriptions. Favorites. History. The app went from one file to 13 services talking to each other.

That's when things started breaking in ways I couldn't just "paste the error" to fix.
